Traditional music-recommendation techniques are based on collaborative filtering (CF), which leverages the listening patterns of many users: if enough users listen to both artist X and artist Y, the system recommends artist Y to any user who listens to artist X. While this technique is very effective, it cannot recommend new songs or artists, since they have no listening history to draw upon.
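
The co-listening idea, and its limitation with new artists, can be illustrated with a minimal sketch. The listening histories, function names, and the simple co-occurrence count below are illustrative assumptions, not the method of any particular CF system.

```python
from collections import Counter
from itertools import permutations

# Hypothetical listening histories: user -> set of artists played.
histories = {
    "u1": {"X", "Y"},
    "u2": {"X", "Y"},
    "u3": {"X", "Z"},
    "u4": {"Y", "Z"},
}

def co_listen_counts(histories):
    """Count, for each ordered artist pair (a, b), the users who played both."""
    counts = Counter()
    for artists in histories.values():
        counts.update(permutations(sorted(artists), 2))
    return counts

def recommend(artist, counts, k=1):
    """Return up to k artists most often co-listened with `artist`."""
    scored = [(n, b) for (a, b), n in counts.items() if a == artist]
    scored.sort(key=lambda t: (-t[0], t[1]))  # highest count first, ties by name
    return [b for _, b in scored[:k]]

counts = co_listen_counts(histories)
print(recommend("X", counts))    # ['Y']
print(recommend("NEW", counts))  # []  (no history, so nothing to draw upon)
```

An artist that appears in no listening history never enters the co-occurrence counts, so it can neither receive recommendations nor be recommended.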

This research tackles music recommendation for new songs and artists by basing recommendations solely on audio content, rather than on metadata (such as genre or artist) or listening histories. The study proposes a distance metric, computed solely from raw audio, that can serve as the basis for recommending songs that best reflect the user’s library.
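
One way a distance metric could drive recommendations is sketched below. This is an assumption-laden illustration: the mean-distance ranking rule, the function names, and the two-dimensional toy vectors are hypothetical, and a plain Euclidean distance stands in for the learned audio-based metric.

```python
import math

def euclidean(a, b):
    """Stand-in distance; the study uses a learned, audio-based metric instead."""
    return math.dist(a, b)

def recommend_by_distance(library, candidates, distance, k=2):
    """Rank candidates by their mean distance to the songs in the user's library."""
    def score(name):
        total = sum(distance(candidates[name], v) for v in library.values())
        return total / len(library)
    return sorted(candidates, key=score)[:k]

# Hypothetical 2-D feature vectors standing in for songs.
library = {"liked_1": (0.0, 0.0), "liked_2": (1.0, 0.0)}
candidates = {"near": (0.5, 0.1), "mid": (2.0, 0.0), "far": (5.0, 5.0)}

print(recommend_by_distance(library, candidates, euclidean))  # ['near', 'mid']
```

Because the ranking needs only the candidate itself and the user's library, a brand-new song with no listening history can still be scored.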

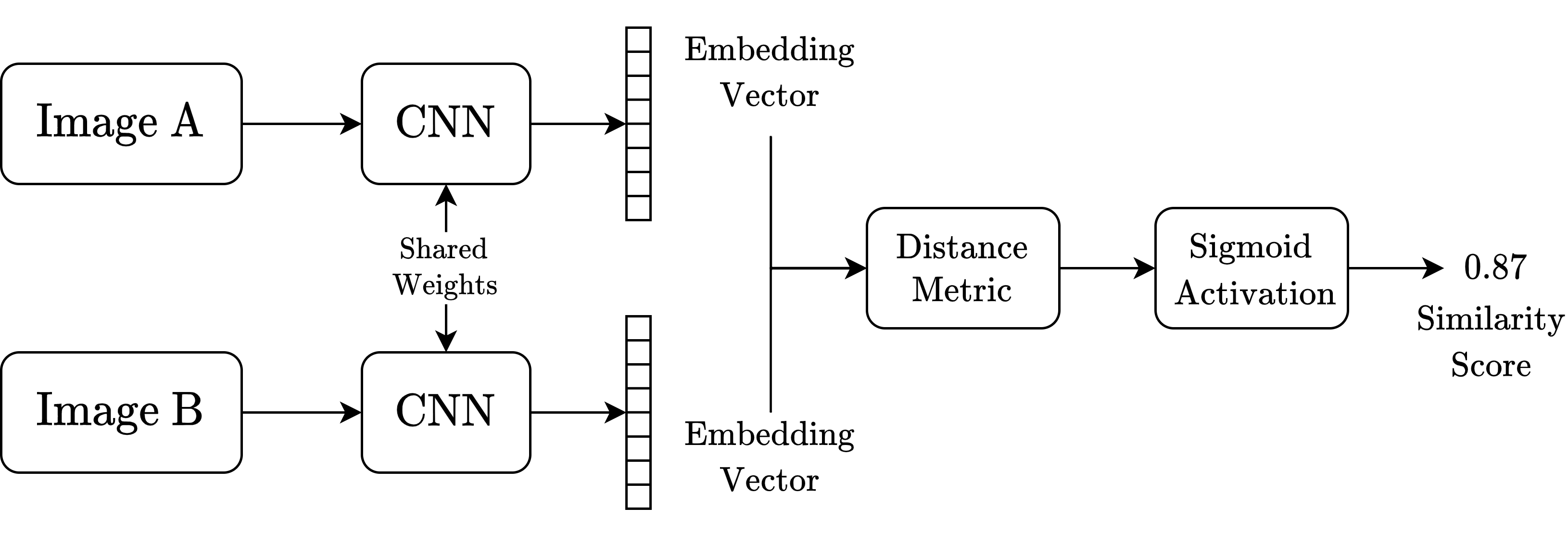

The distance metric was created by training a Siamese neural network (SNN) on a dataset of similar and dissimilar song pairs. Each song was first converted into a Mel spectrogram, yielding a bitmap representation of its audio. The SNN consists of two identical convolutional neural networks (CNNs), each of which is fed the Mel-spectrogram bitmap of one song in the pair. By training on this dataset of song-image pairs, the SNN learns to act as a distance metric between songs, based on the raw audio files. The SNN model achieved an accuracy of 82% on the test set.
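
The weight-sharing structure of a Siamese network can be sketched in a few lines of NumPy. This is only a forward pass with random, untrained weights: the layer sizes, the Euclidean comparison of embeddings, and the random arrays standing in for Mel-spectrogram bitmaps are all assumptions, and the study's actual architecture, loss function, and training procedure are not reproduced here.

```python
import numpy as np

rng = np.random.default_rng(0)

def conv2d_valid(image, kernel):
    """Plain 2-D 'valid' cross-correlation, the core operation of a CNN layer."""
    kh, kw = kernel.shape
    out_h, out_w = image.shape[0] - kh + 1, image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

class SharedEncoder:
    """Tiny hypothetical encoder: one conv layer, ReLU, global average pooling."""

    def __init__(self, n_filters=4, kernel_size=3):
        self.kernels = rng.standard_normal((n_filters, kernel_size, kernel_size))

    def __call__(self, spectrogram):
        maps = [np.maximum(conv2d_valid(spectrogram, k), 0.0) for k in self.kernels]
        return np.array([m.mean() for m in maps])  # one value per filter

def siamese_distance(encoder, spec_a, spec_b):
    """Both inputs pass through the SAME encoder, i.e. the two branches share weights."""
    return float(np.linalg.norm(encoder(spec_a) - encoder(spec_b)))

# Random arrays standing in for Mel-spectrogram bitmaps (mel bands x time frames).
spec_a = rng.random((16, 32))
spec_b = rng.random((16, 32))

encoder = SharedEncoder()
d_ab = siamese_distance(encoder, spec_a, spec_b)
d_ba = siamese_distance(encoder, spec_b, spec_a)
d_aa = siamese_distance(encoder, spec_a, spec_a)
print(d_ab, d_ba, d_aa)
```

Sharing one encoder between both branches makes the output symmetric in its two inputs and zero for identical inputs, which is what lets a trained network of this shape behave like a distance between songs.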

A web app was developed to evaluate the system’s performance with real users. Survey participants first created a small library of songs they liked and then rated the recommendations generated automatically by the system. The evaluation used A/B testing: participants unknowingly rated songs from both the proposed system and a genre-based recommendation heuristic, allowing for meaningful analysis and comparison.
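
A blind A/B evaluation of this kind could be organised roughly as follows. The function names, the source labels, the 1-to-5 rating scale, and the ratings themselves are hypothetical placeholders rather than details or results of the study.

```python
import random
from statistics import mean

def build_blind_playlist(system_recs, baseline_recs, seed=None):
    """Mix both sources and shuffle so the rater cannot tell them apart."""
    playlist = [(song, "siamese") for song in system_recs]
    playlist += [(song, "genre") for song in baseline_recs]
    random.Random(seed).shuffle(playlist)
    return playlist

def summarise(playlist, ratings):
    """Mean rating per hidden source; ratings[i] belongs to playlist[i]."""
    by_source = {}
    for (_, source), rating in zip(playlist, ratings):
        by_source.setdefault(source, []).append(rating)
    return {source: mean(values) for source, values in by_source.items()}

# Hypothetical recommendations from the two sources.
playlist = build_blind_playlist(["s1", "s2"], ["g1", "g2"], seed=42)
shown_to_user = [song for song, _ in playlist]  # source labels stay hidden

# Made-up ratings (1-5) purely to exercise the code; not study results.
ratings = [4 if source == "siamese" else 3 for _, source in playlist]
summary = summarise(playlist, ratings)
print(summary)
```

Keeping the source label out of what the participant sees, and joining it back only at analysis time, is what allows the two recommenders to be compared without biasing the ratings.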

Course: B.Sc. IT (Hons.) Artificial Intelligence

Supervisor: Dr Josef Bajada